Patient journey personalization is no longer a “nice to have” in pharma. Patients expect relevant education, smoother enrollment, and support that adjusts to where they are emotionally and clinically, while brand teams need measurable lift without adding operational chaos.

This article is for US pharma brand teams, omnichannel leads, commercial ops, marketing ops, and CRM owners, plus agencies supporting HCP engagement and patient support programs. You will learn practical ways AI can improve orchestration and “next best action” decisioning, how to keep personalization compliance-aware, and the most common pitfalls that slow teams down or create risk.

What “patient journey personalization” means in pharma

In a pharma context, personalization is the disciplined matching of the right message, channel, and timing to a patient’s needs, preferences, and eligibility. It is not simply adding a first name to an email or building dozens of micro-segments that no one can maintain.

A useful working definition is: personalization = consistent, consent-aware decisions across touchpoints. That covers patient education, sign-ups, adherence programs, and patient support programs such as nurse outreach or affordability navigation.

Personalization needs to be “journey-first,” not channel-first

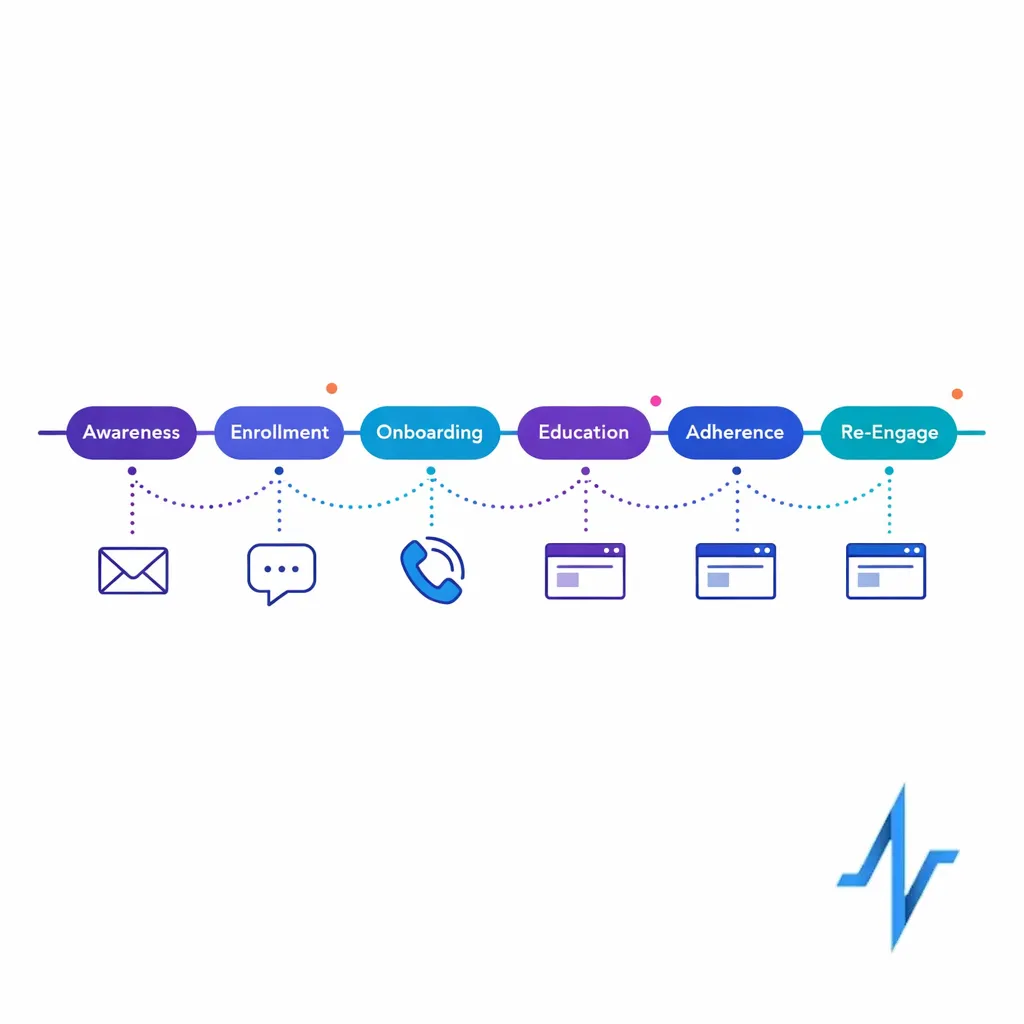

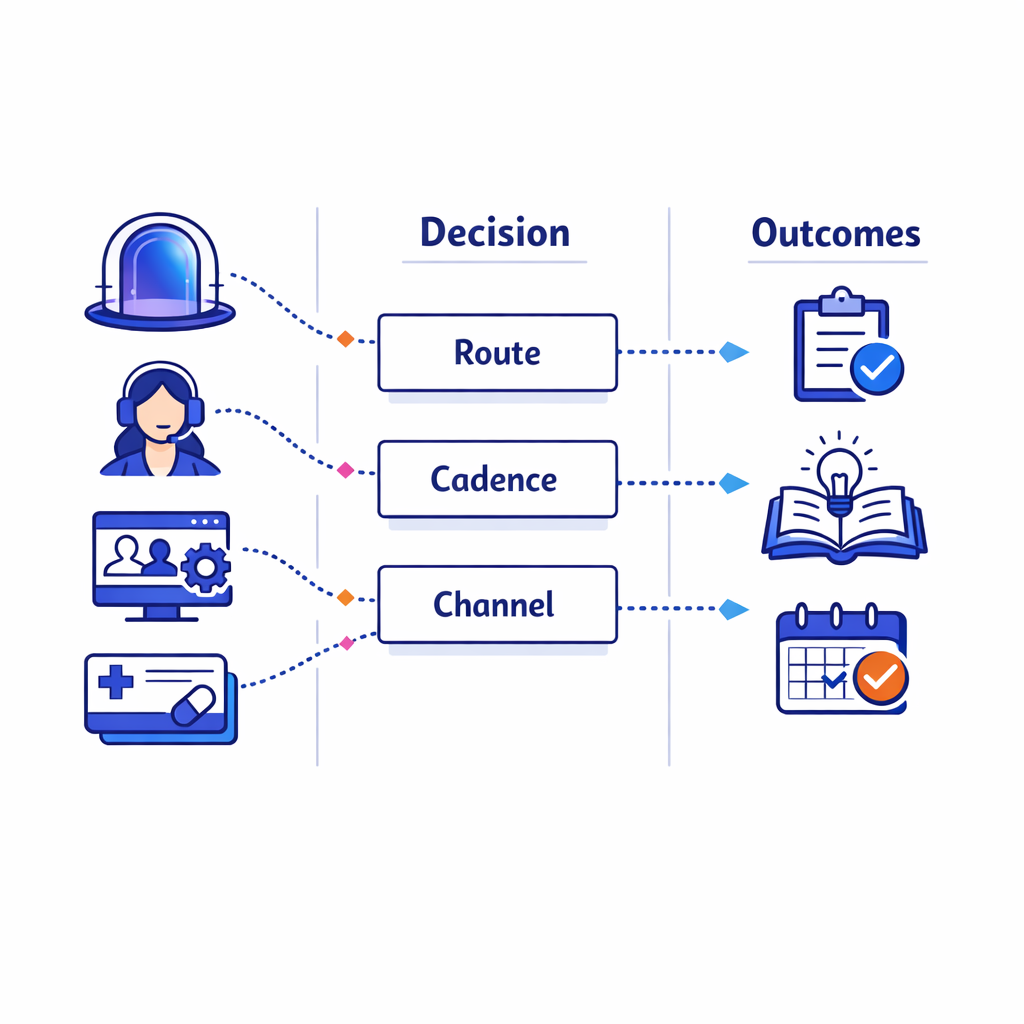

A journey-first orchestration view coordinates channels and timing across touchpoints so patients receive coherent, non‑conflicting outreach.

Teams often optimize one channel at a time (email, SMS, call center) and unintentionally create a disjointed experience. AI in pharma marketing is most effective when it helps coordinate decisions across channels, so a patient does not receive redundant or conflicting outreach.

That coordination requires a shared view of the journey stages (for example: awareness, enrollment, onboarding, ongoing education, adherence and persistence, re-engagement). Even if your data is imperfect, a journey map gives you a stable structure for measurement and continuous improvement.

Where AI actually helps (and where it does not)

AI is most valuable when it reduces decision friction and operational work, not when it tries to “out-create” your medical-legal-regulatory (MLR) process. The highest-impact use cases typically look like decisioning, orchestration, and measurement improvements rather than fully automated content generation.

Use cases that tend to work well

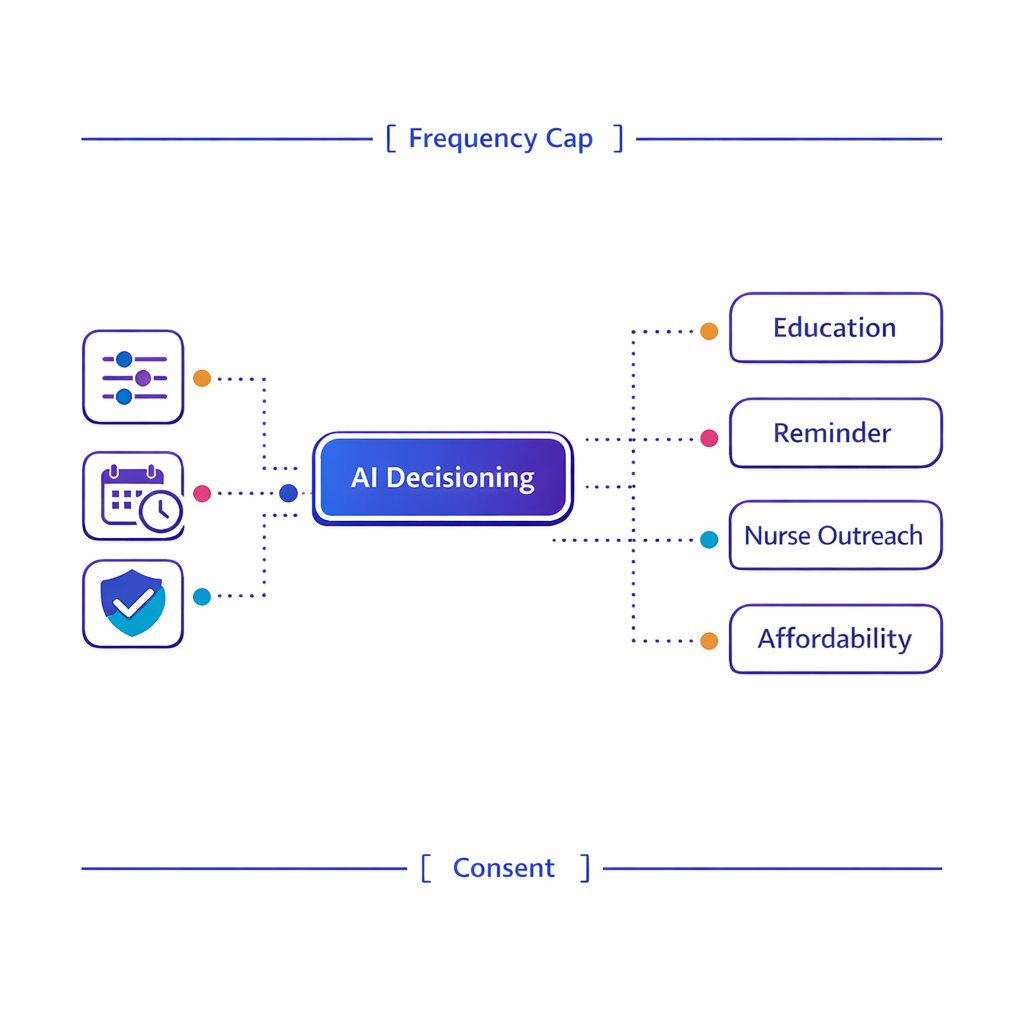

Decision trees and next‑best‑action logic can reduce manual routing and ensure actions obey clinical and consent guardrails.

- Next-best-action decisioning: selecting the next step (educational message, nurse outreach, reminder, affordability option) based on patient signals and business rules.

- Journey orchestration: coordinating timing across email, SMS, portal, call center, and field or virtual support so the patient experiences one coherent plan.

- Eligibility and routing: directing patients to the right pathway (education vs enrollment vs affordability vs nurse support) using verified attributes and clear guardrails.

- Operational automation: summarizing interactions for agents, triaging inbound requests, and reducing manual CRM work (with human review where needed).

- Measurement and experimentation: recommending what to test next and monitoring lift, drop-offs, and friction points through holdouts and controlled experiments.

Use cases that usually create avoidable risk

Personalization fails when teams use AI to bypass governance. For regulated programs, generating new claims or “ad-like” messages on the fly can be difficult to review consistently and can increase the chance of noncompliant presentation of benefit and risk.

Instead, AI should primarily select from pre-approved modules, determine cadence, and recommend channels based on consent and preferences. If you do apply generative AI, keep it constrained to approved language and enforce review workflows.

What changed and what’s new: why personalization is getting harder (and more important)

AI-driven personalization is accelerating, but scrutiny of health data use is also increasing. Three changes are shaping how pharma teams should design patient journey personalization now.

1) Tighter expectations around tracking and data disclosure

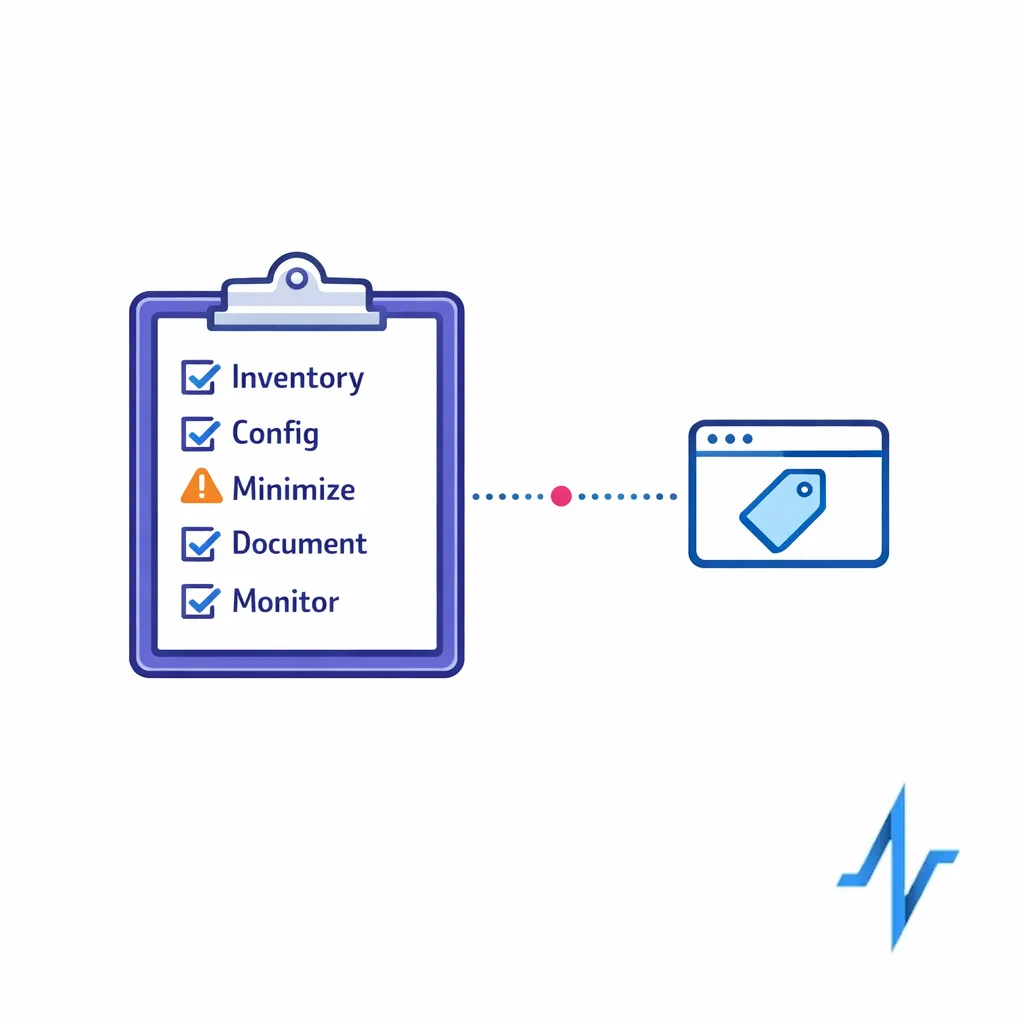

Use a tracking-technology risk checklist to inventory tags, confirm configurations, and minimize outbound health‑related identifiers.

Regulators have been explicit that common web and app tracking patterns can create sensitive disclosures when they transmit health-related identifiers and event data. The HHS OCR guidance on HIPAA and online tracking technologies is frequently used as a reference point for how to evaluate pixels, tags, and analytics configurations in health contexts.

Even when HIPAA does not directly apply to a specific brand or program, the guidance is a practical risk checklist: what data is leaving your environment, who receives it, and whether you can justify it as necessary for the patient experience.

2) More enforcement pressure around health data incidents

Organizations supporting patient engagement platforms, patient support programs, and digital experiences should understand breach-notification obligations that may apply depending on their role and data type. The FTC Health Breach Notification Rule is one key reference for certain health data holders outside of HIPAA-covered entities and includes requirements for notifying consumers following specific types of breaches.

This matters for personalization because identity and measurement are often the first places where “shadow” data flows appear. If you cannot inventory where patient-related signals go, you cannot reliably govern AI decisioning.

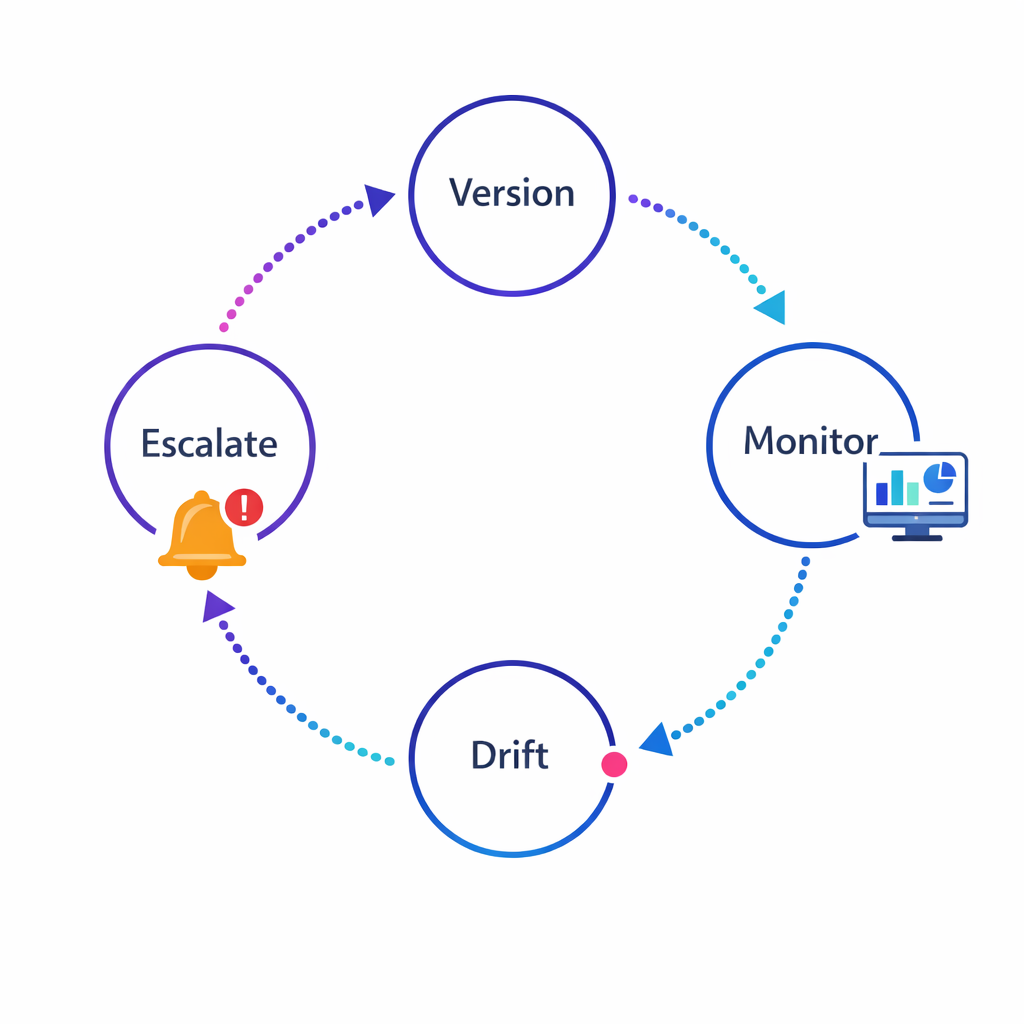

3) AI governance is becoming a baseline expectation

Build an AI governance lifecycle that includes model versioning, monitoring, drift checks, and clear escalation paths.

Teams are being asked to explain how AI decisions are made, what risks exist, and how they are monitored. The NIST AI Risk Management Framework (AI RMF 1.0) is a widely referenced approach for governing, mapping, measuring, and managing AI risks, with attention to characteristics such as validity, reliability, bias, transparency, and accountability.

In practice, “AI governance” for omnichannel personalization is less about one document and more about repeatable controls: audit trails, monitoring, escalation paths, and a clear model lifecycle.

What works: proven patterns for compliant, high-lift AI personalization

The most successful AI programs in pharma marketing treat personalization as a system. They combine data discipline, content discipline, and operational discipline so that AI improves speed and relevance without creating compliance churn.

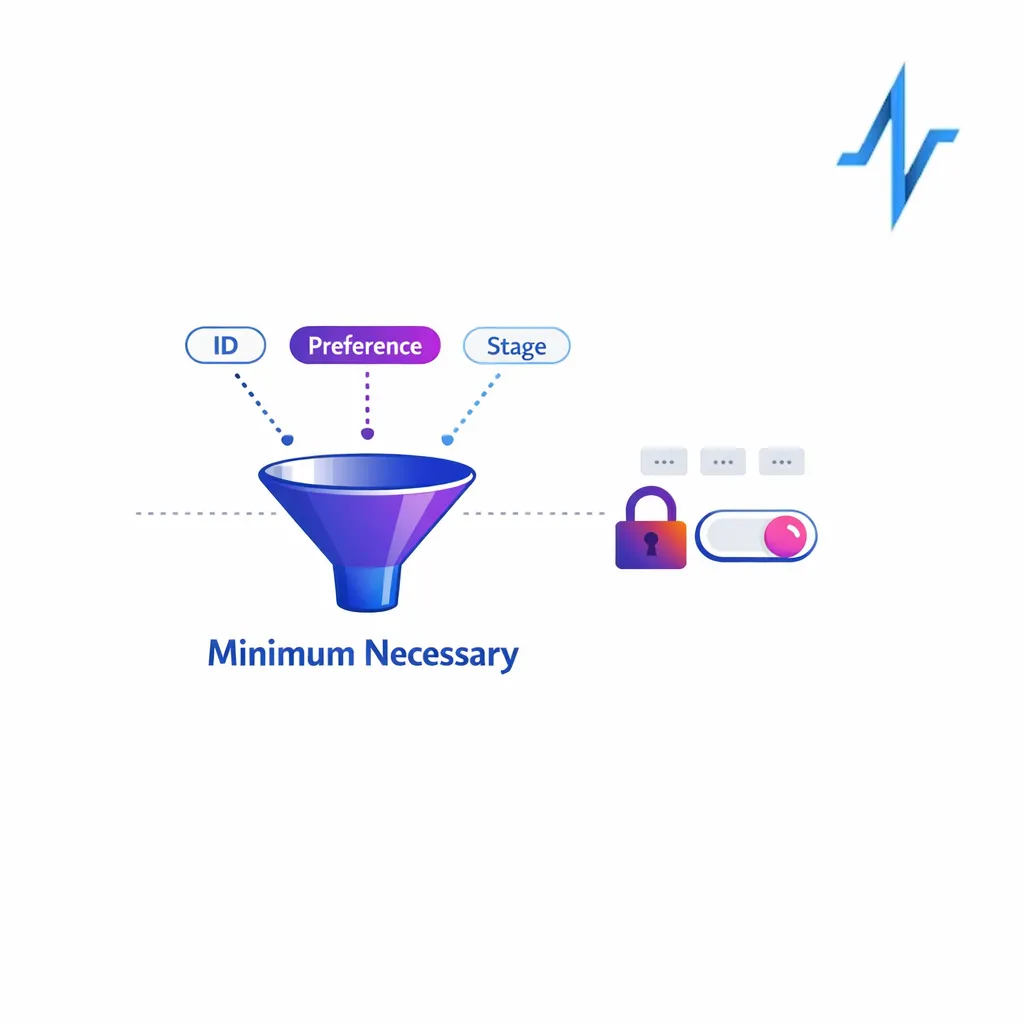

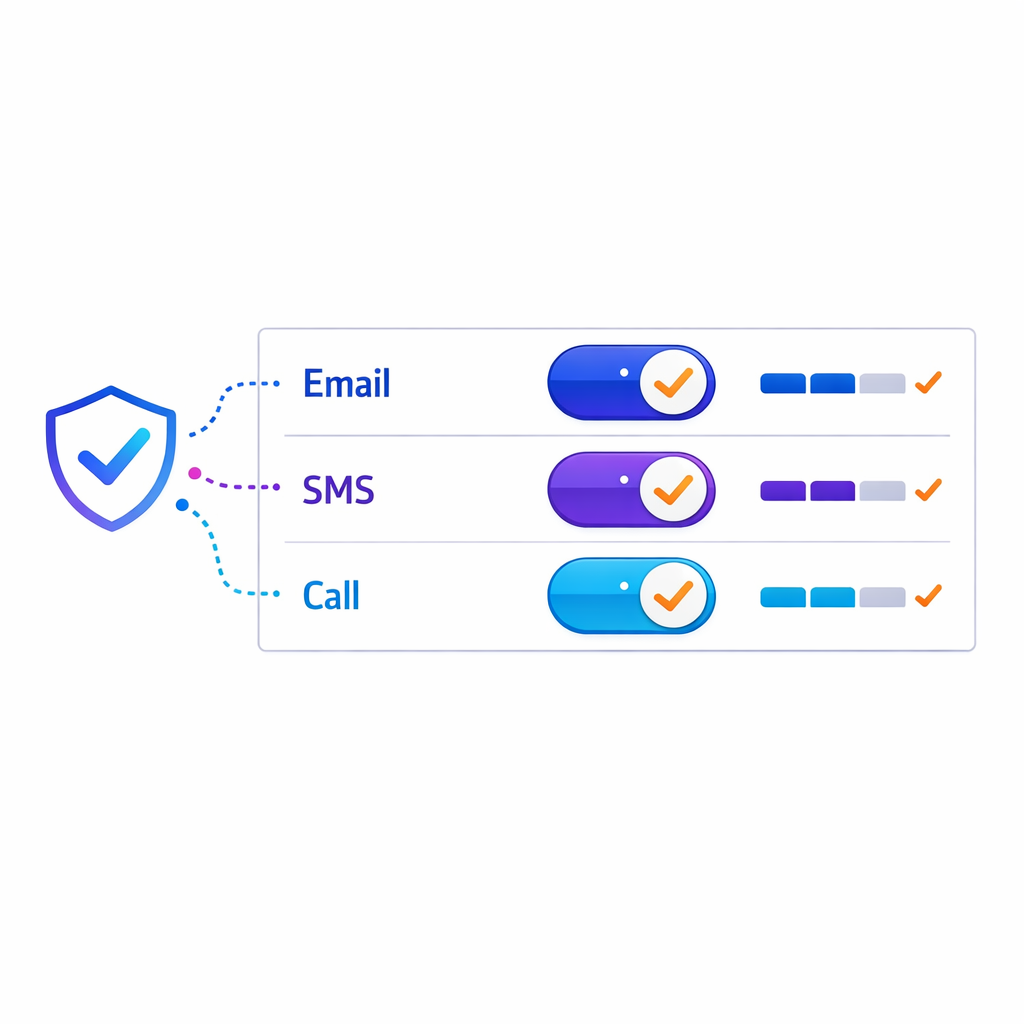

1) Start with consent, purpose, and data minimization

Begin with clear consent, defined purpose, and a minimum‑necessary mindset for each data element used in personalization.

Personalization should begin with a clear statement of why you are collecting each data element and how it improves the patient experience. If your workflows involve protected health information from a covered entity or business associate, align requirements with the HIPAA Privacy Rule and define permitted uses and disclosures before you build models and audiences.

Even outside HIPAA, a “minimum necessary” mindset reduces the risk of over-collection and makes measurement easier. It also helps you explain personalization choices to internal stakeholders and partners.

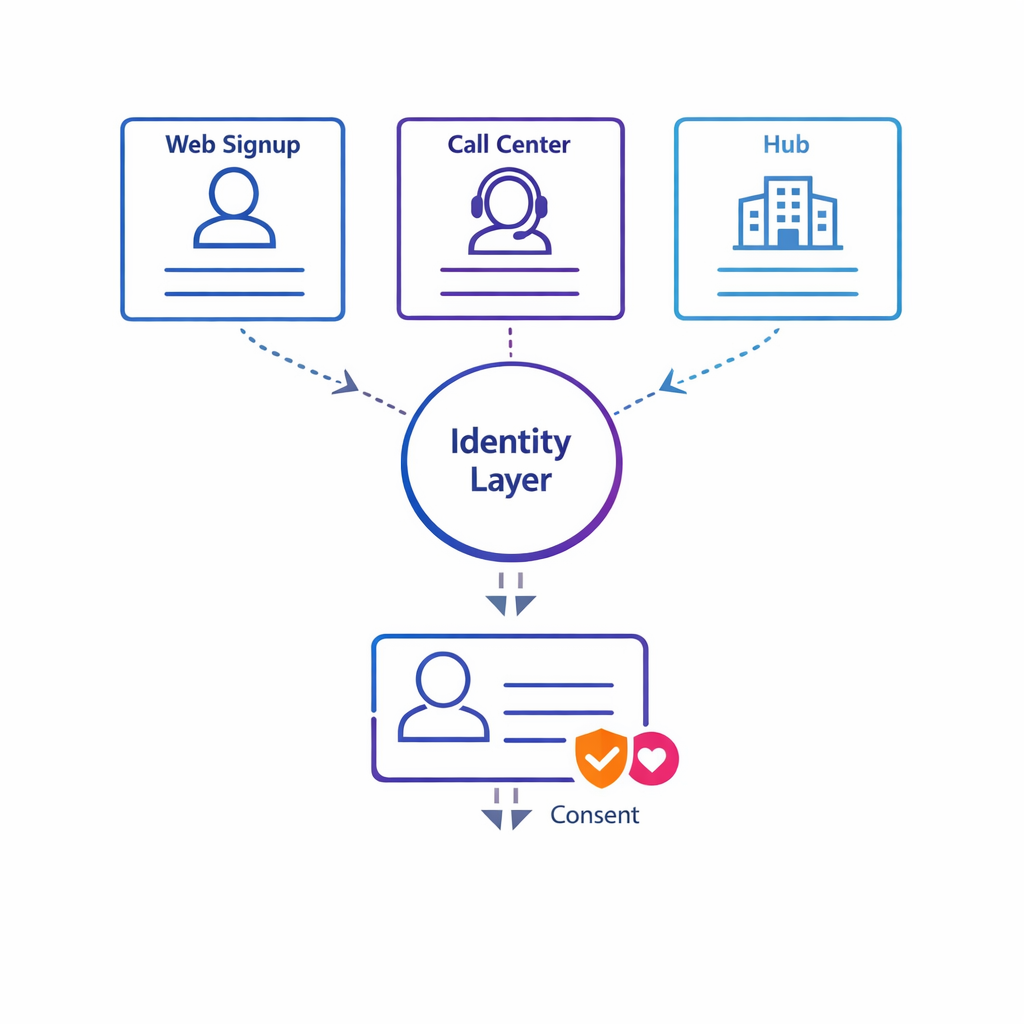

2) Build a compliant identity layer before you build “smart journeys”

Invest in a consent-aware identity layer so AI decisions operate on unified, reliable patient records rather than fragmented profiles.

Most personalization complexity is identity complexity: a patient who signs up via a hub, calls a nurse line, visits a program site, and interacts with emails can appear as multiple profiles. When teams skip identity foundations, AI makes decisions on fragmented records and creates inconsistent outreach.

A robust approach typically includes deterministic matching where possible, clear rules for merges and splits, and consent-aware identity resolution for measurement. This is also where you should define which identifiers can be used for which purpose, and where they can be stored.
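As a rough illustration of deterministic, consent-aware matching, the sketch below groups records that share an exactly matching normalized email or phone number, and only considers records consented to the stated purpose. The `Profile` fields, the purpose names, and the 10-digit phone assumption are all hypothetical; a production identity layer would also need split rules, storage rules per identifier, and review of edge cases.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Profile:
    """One source-system record. All field names are hypothetical."""
    source: str
    email: Optional[str] = None
    phone: Optional[str] = None
    consented_purposes: set = field(default_factory=set)


def match_keys(profile):
    """Build exact-match keys from normalized identifiers (deterministic only)."""
    keys = set()
    if profile.email:
        keys.add(("email", profile.email.strip().lower()))
    if profile.phone:
        digits = "".join(ch for ch in profile.phone if ch.isdigit())
        if len(digits) >= 10:
            keys.add(("phone", digits[-10:]))  # assumes 10-digit US numbers
    return keys


def resolve(profiles, purpose):
    """Group profiles that share a key, using only records consented to `purpose`."""
    groups = []  # each entry: (set of keys, list of member profiles)
    for profile in profiles:
        if purpose not in profile.consented_purposes:
            continue  # never link a record for a purpose it has not consented to
        keys, members, untouched = match_keys(profile), [profile], []
        for group_keys, group_members in groups:
            if group_keys & keys:
                # Shared identifier: merge the existing group into this one.
                keys |= group_keys
                members = group_members + members
            else:
                untouched.append((group_keys, group_members))
        groups = untouched + [(keys, members)]
    return [members for _, members in groups]


hub = Profile("hub", email=" Pat@Example.com ", consented_purposes={"orchestration"})
nurse = Profile("nurse_line", email="pat@example.com", phone="(555) 010-0000",
                consented_purposes={"orchestration"})
site = Profile("program_site", phone="555-010-0000",
               consented_purposes={"orchestration", "measurement"})
other = Profile("email", email="sam@example.com", consented_purposes={"orchestration"})

orchestration_groups = resolve([hub, nurse, site, other], "orchestration")
measurement_groups = resolve([hub, nurse, site, other], "measurement")
```

Resolving per purpose means the same four records can form one linked patient for orchestration while only the consented record is visible for measurement.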

3) Use AI for selection and sequencing, not “free-form claims”

Prefer AI that selects and sequences MLR‑approved modules over systems that generate free‑form claims requiring constant review.

AI performs best when it selects from controlled options, not when it generates new language that needs constant review. A practical pattern is: MLR-approved content modules + AI-driven assembly + channel-specific templates.
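To make the "approved modules + AI-driven assembly + templates" pattern concrete, here is a minimal sketch. The `Module` fields, the `TEMPLATES` placeholders, and the `scores` dictionary (standing in for whatever model ranks content) are assumptions for illustration, not a reference to any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    """A content module with metadata. Field names are hypothetical."""
    module_id: str
    stage: str
    channels: frozenset
    mlr_approved: bool
    body: str


# Hypothetical channel templates; real ones would carry approved wording.
TEMPLATES = {
    "sms": "{body} [approved SMS footer]",
    "email": "[approved email header]\n{body}\n[approved email footer]",
}


def select_module(library, stage, channel, scores):
    """Return the best-scoring approved module for this stage and channel, or None."""
    options = [
        m for m in library
        if m.mlr_approved and m.stage == stage and channel in m.channels
    ]
    if not options:
        return None
    # `scores` stands in for model output: module_id -> predicted relevance.
    return max(options, key=lambda m: scores.get(m.module_id, 0.0))


def assemble(module, channel):
    """Place the approved body into the channel template without altering it."""
    return TEMPLATES[channel].format(body=module.body)


library = [
    Module("edu-01", "onboarding", frozenset({"email", "sms"}), True, "[approved text A]"),
    Module("edu-02", "onboarding", frozenset({"email"}), True, "[approved text B]"),
    Module("edu-03", "onboarding", frozenset({"sms"}), False, "[draft text C]"),
]
scores = {"edu-01": 0.4, "edu-02": 0.7, "edu-03": 0.9}

choice = select_module(library, "onboarding", "sms", scores)
message = assemble(choice, "sms")
```

The model only ranks; the approval flag and metadata filter decide what is eligible, so an unapproved draft cannot be sent even when it scores highest.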

When you operate in digital environments with tight space constraints, you also need to ensure the experience can support appropriate presentation of benefit and risk. The FDA guidance on character-space-limited platforms is a helpful reference for thinking about how to present risk and benefit information when space is constrained.

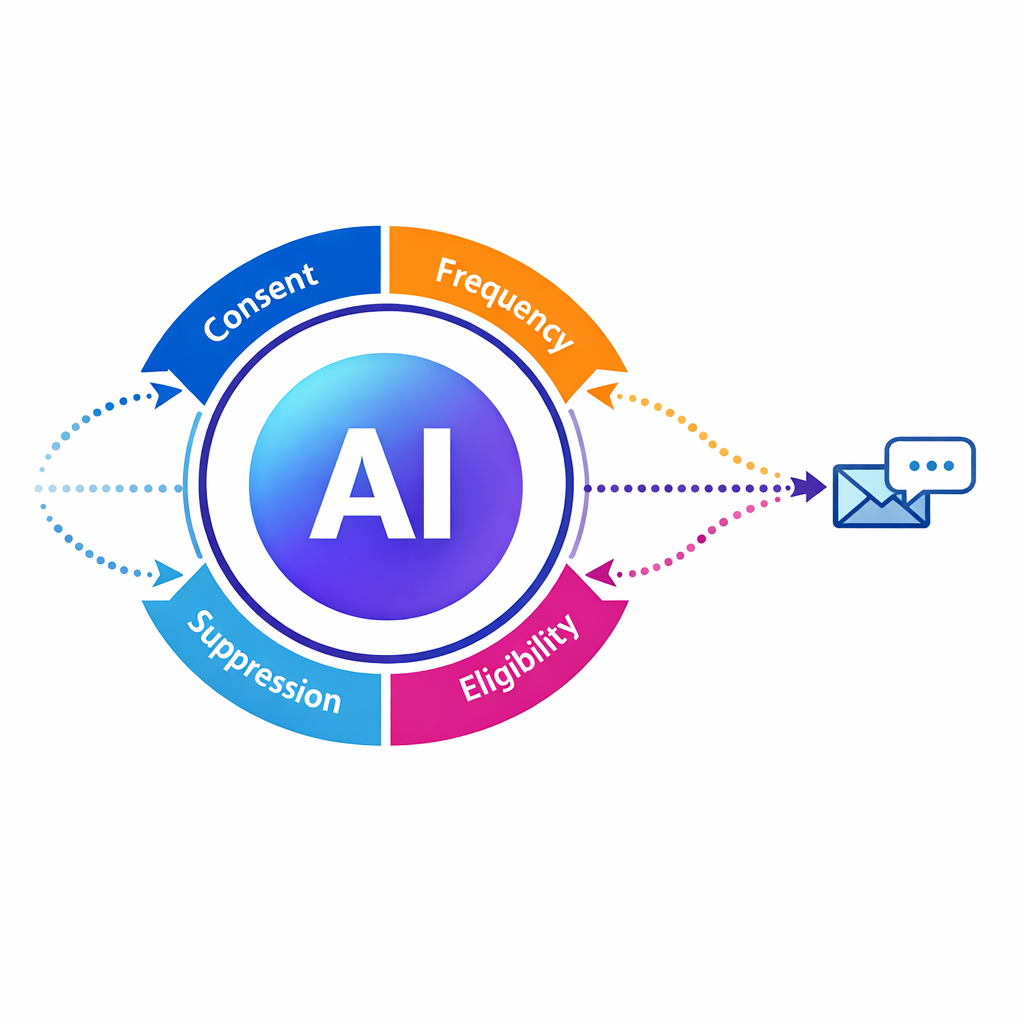

4) Combine AI with explicit business rules and safety guardrails

Layer explicit business rules and safety guardrails around AI decisions to ensure clinical appropriateness and compliance.

The goal is not to let AI “run the program.” The goal is to let AI improve decisions within guardrails that reflect brand strategy, clinical appropriateness, and compliance requirements.

Common guardrails include contact frequency limits, channel permissions, suppression logic for sensitive moments, escalation triggers for nurse outreach, and rule-based eligibility checks for enrollment steps.
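A minimal sketch of how such guardrails might wrap a ranked list of actions is shown below. The `PatientState` fields, the weekly cap of three contacts, and the action names are hypothetical; the ranked list stands in for model output.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

MAX_CONTACTS_PER_WEEK = 3  # hypothetical cap; set per program policy


@dataclass
class PatientState:
    """Signals the guardrails need. Field names are hypothetical."""
    permitted_channels: set
    recent_contacts: list  # datetimes of past outbound touches
    suppressed_until: Optional[datetime] = None


def check_guardrails(state, channel, now):
    """Return (allowed, reason) for one proposed outbound touch."""
    if channel not in state.permitted_channels:
        return False, "channel_not_permitted"
    if state.suppressed_until and now < state.suppressed_until:
        return False, "suppressed"
    window_start = now - timedelta(days=7)
    touches = [t for t in state.recent_contacts if t >= window_start]
    if len(touches) >= MAX_CONTACTS_PER_WEEK:
        return False, "frequency_cap"
    return True, "ok"


def next_best_action(state, ranked_actions, now):
    """Take the first (action, channel) pair, best first, that passes every rule."""
    for action, channel in ranked_actions:
        allowed, _reason = check_guardrails(state, channel, now)
        if allowed:
            return action, channel
    return None  # no compliant action: do nothing rather than break a rule


now = datetime(2024, 1, 15, 12, 0)
state = PatientState({"email", "sms"}, [now - timedelta(days=1)])
ranked = [("nurse_call", "phone"), ("reminder", "sms"), ("education", "email")]
decision = next_best_action(state, ranked, now)
```

Returning `None` when nothing passes keeps "no outreach" as a valid outcome, which is usually the safer default in sensitive moments.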

5) Design for auditability from day one

Make decision logs a standard part of the system so you can reconstruct why a patient received a specific message at a given time.

In pharma, you need to answer: “Why did this patient receive this message at this time?” If you cannot reconstruct the decision path, you will struggle to scale personalization across brands and agencies.

Each log entry should record the inputs used, consent status, model version, rule overrides, and the content modules selected. This reduces MLR friction and improves troubleshooting when metrics change.
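One way to capture those fields is a small, immutable record written as one JSON line per decision. This is a sketch under assumptions: the field names, the pseudonymous `patient_ref`, and the in-memory `audit_log` list (standing in for a real append-only store) are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One auditable decision. Field names are hypothetical."""
    patient_ref: str        # pseudonymous reference, not a direct identifier
    decided_at: str         # ISO 8601 timestamp in UTC
    inputs_used: dict
    consent_status: dict
    model_version: str
    rule_overrides: list
    modules_selected: list


def log_decision(record, sink):
    """Serialize the record as one JSON line and append it to the sink."""
    line = json.dumps(asdict(record), sort_keys=True)
    sink.append(line)
    return line


audit_log = []  # stand-in for an append-only store
record = DecisionRecord(
    patient_ref="p-001",
    decided_at=datetime(2024, 1, 15, 12, 0, tzinfo=timezone.utc).isoformat(),
    inputs_used={"journey_stage": "onboarding", "days_since_last_step": 6},
    consent_status={"sms": True, "email": False},
    model_version="nba-model-0.3",
    rule_overrides=["frequency_cap"],
    modules_selected=["edu-01"],
)
log_decision(record, audit_log)
```

Logging only the inputs actually used, rather than the full profile, keeps the audit trail useful without turning it into another copy of patient data.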

6) Treat security controls as part of the personalization product

Treat security controls (least privilege, encryption, vendor review) as part of the personalization product, not an afterthought.

AI personalization increases the number of systems that touch patient-related signals, which increases attack surface and operational risk. If HIPAA security requirements apply in your ecosystem, map safeguards to the HIPAA Security Rule and implement the administrative, physical, and technical controls that match your data flows.

Regardless of whether HIPAA applies, use the same discipline: least-privilege access, environment separation, vendor risk review, encryption where appropriate, and strong incident response playbooks.

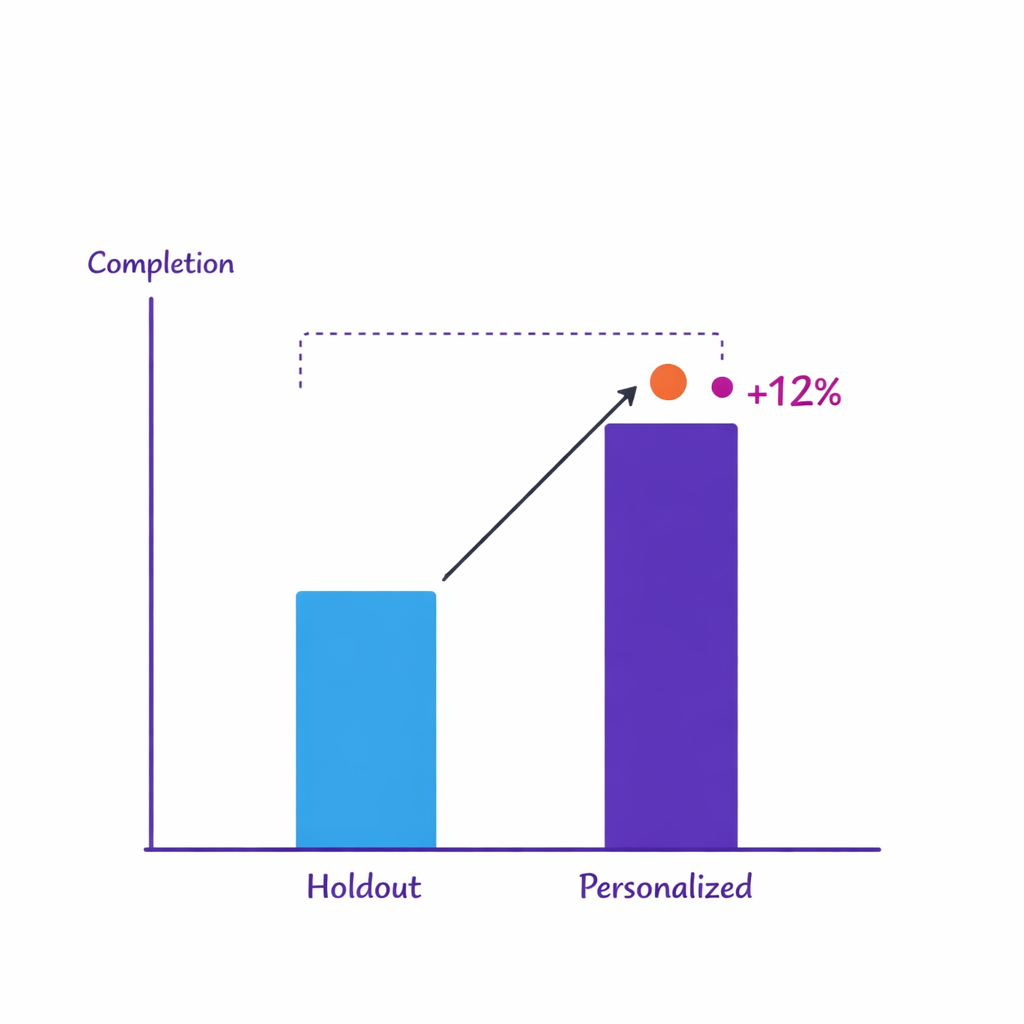

7) Measure outcomes with holdouts, not just engagement

Measure causality with holdouts and controlled experiments to prove true lift rather than relying solely on engagement metrics.

Clicks and opens can be useful diagnostics, but they are not the reason patient programs exist. Strong personalization programs define outcome metrics by stage, such as reduced time-to-enrollment, improved completion of education steps, or better persistence signals.

Use holdout groups and controlled tests to distinguish true lift from “more activity.” AI can help prioritize which journeys and segments to test next, but your measurement design must be intentional.
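A holdout only works if assignment is stable and independent of patient behavior. One common approach, sketched here, is to hash a pseudonymous reference together with an experiment name. The function name, the 10% default, and the identifier format are assumptions for illustration.

```python
import hashlib


def in_holdout(patient_ref, experiment, holdout_pct=10):
    """Deterministically assign a patient to the holdout for one experiment.

    Hashing the experiment name with the reference gives each experiment an
    independent split, and the same patient always lands in the same group.
    """
    digest = hashlib.sha256(f"{experiment}:{patient_ref}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # bucket in 0..99
    return bucket < holdout_pct


# Rough check that about 10% of hypothetical references fall in the holdout.
refs = [f"p-{i}" for i in range(10_000)]
holdout_share = sum(in_holdout(r, "onboarding-v1") for r in refs) / len(refs)
```

Because assignment needs no stored state, every channel and vendor integration can apply the same rule and suppress personalized outreach for the same patients.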

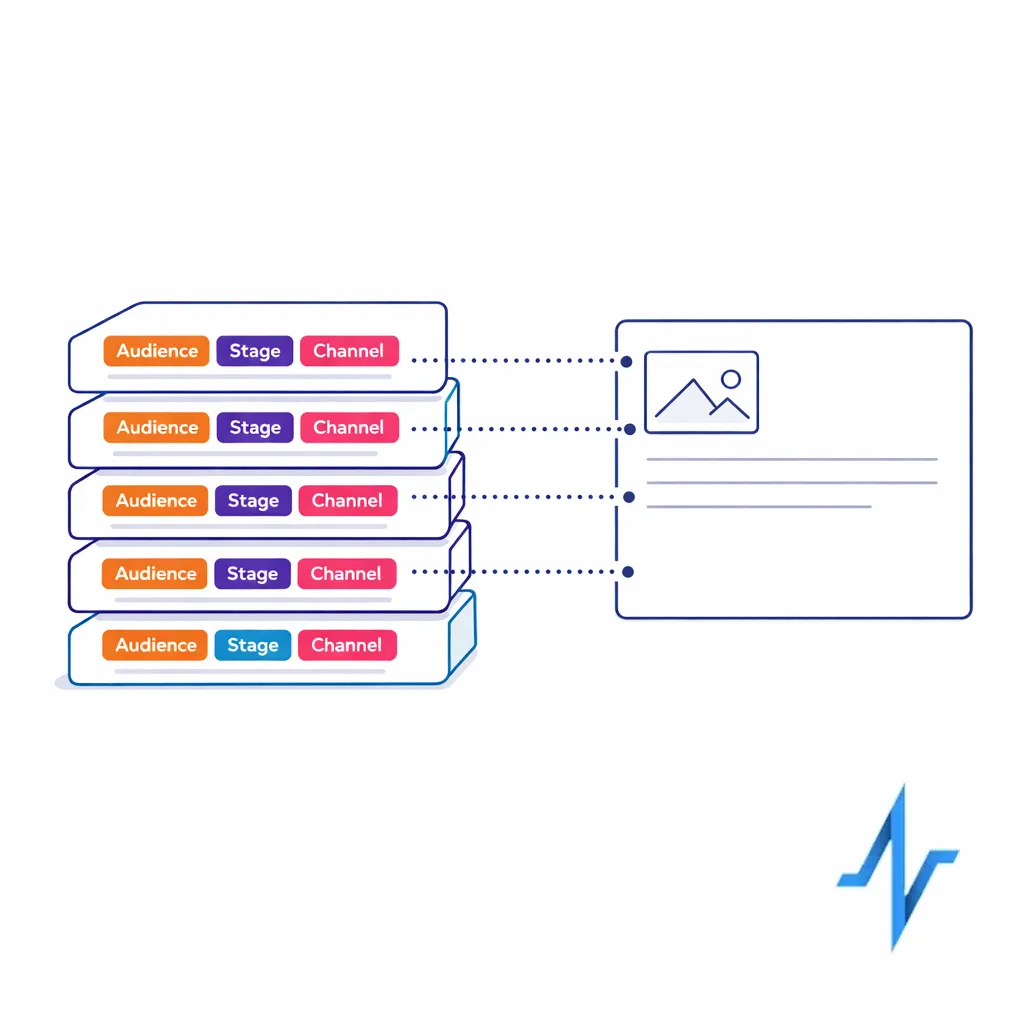

8) Operationalize with a clear “content-to-journey” supply chain

Operationalize with a content-to-journey supply chain so modular assets and metadata keep pace with journey requirements.

Personalization only scales when content operations keep pace. That means modular assets, consistent metadata (indication, audience, stage, channel constraints), and a release process that does not require rebuilding journeys every time content changes.

When agencies and internal teams collaborate on the same journeys, shared metadata and standardized review checkpoints prevent rework and reduce the risk of inconsistent experiences.

What to avoid: common mistakes that derail AI personalization

Most failures are not “bad models.” They are avoidable design choices that create compliance uncertainty, identity chaos, or measurement noise.

Mistake 1: Treating AI as a shortcut around MLR

If your AI generates novel claims, improvises tone, or varies risk language across channels, teams spend more time explaining what happened than improving performance. Use AI to improve orchestration and relevance, and keep language constrained to approved modules and templates.

When generative AI is used, narrow the scope to tasks like summarization for internal users, content tagging, or drafting within pre-approved language blocks that are still reviewed.

Mistake 2: Over-personalizing sensitive signals in the wrong channel

Avoid revealing inferred sensitive details in open channels; personalize the path and use authenticated experiences for sensitive content.

Patients may welcome personalized support, but they also notice when brands appear to “know too much,” especially in open or shared channels. A safer pattern is to personalize the path (offer the next step, ask preferences, provide options) rather than revealing inferred sensitive details in outbound messages.

When in doubt, let the patient “pull” sensitive content through authenticated experiences where consent and context are clearer.

Mistake 3: Letting measurement drive hidden data sharing

Identity + measurement is where programs quietly accumulate risk. If you deploy analytics or advertising tags without fully understanding what is transmitted, you can accidentally disclose sensitive signals.

Use the HHS OCR tracking technologies guidance as a practical checklist: inventory tools, confirm configurations, minimize outbound data, and document your rationale and controls.

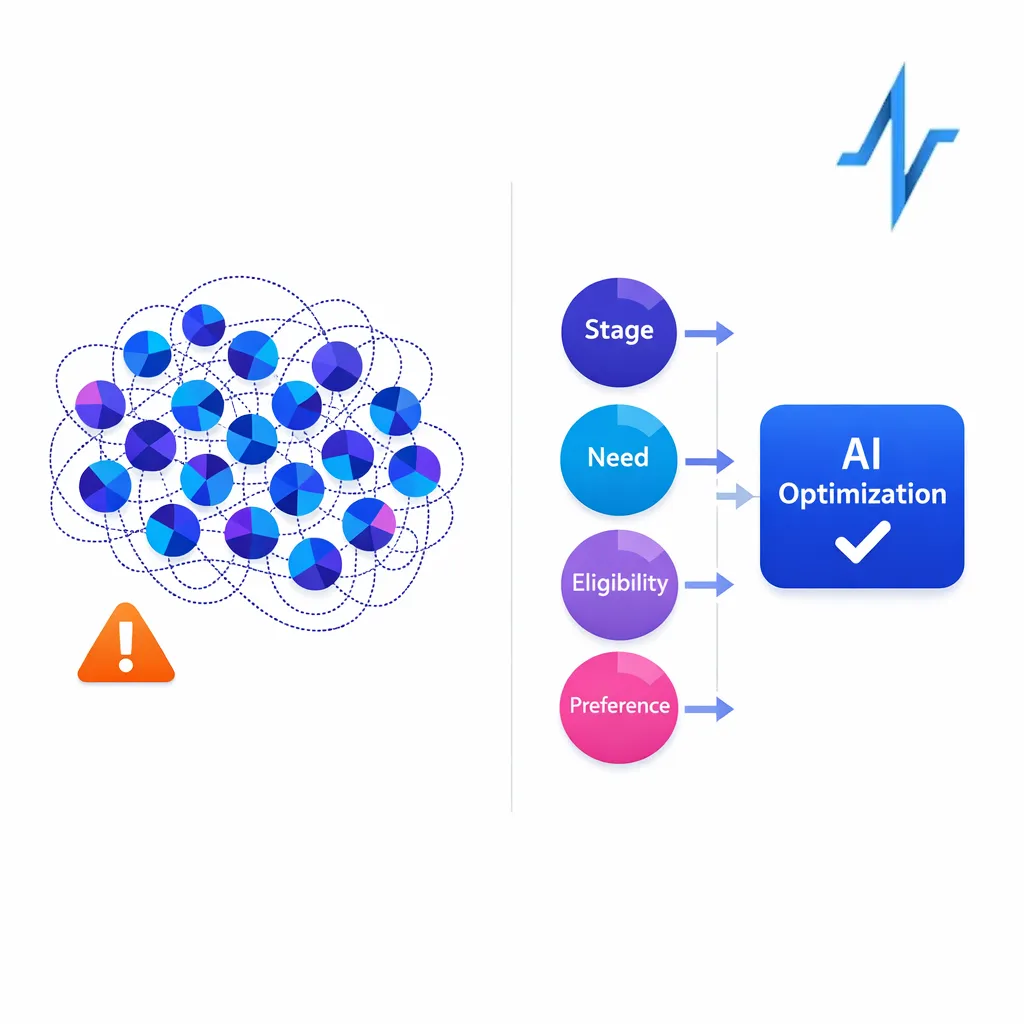

Mistake 4: Confusing “more segmentation” with better personalization

Creating dozens of brittle micro-segments increases operational complexity; keep segments broad and let AI optimize within them.

Creating dozens of segments often looks sophisticated, but it can produce brittle journeys that break when data changes or when operations cannot maintain them. AI personalization should reduce complexity by improving decisions dynamically, not create a taxonomy that only one analyst understands.

A better approach is to keep segments broad (stage, need, eligibility, preference) and let AI optimize within those boundaries.

Mistake 5: Using black-box models with no ability to explain decisions

If stakeholders cannot understand what inputs matter and how decisions are made, adoption slows and governance becomes reactive. Align your approach to transparency and monitoring with frameworks like the NIST AI Risk Management Framework so that model risk management is built in, not bolted on.

Practically, that means versioning models, monitoring drift, testing for unintended bias, and having an escalation path when outputs look wrong.
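As one illustration of drift monitoring, the sketch below computes a population stability index (PSI) between a baseline and a current distribution of model scores that have already been binned. PSI is a commonly used technique chosen here for illustration, not something this article prescribes; the bin counts and the alert threshold are hypothetical and should be calibrated per model.

```python
import math

ALERT_THRESHOLD = 0.2  # hypothetical; calibrate per model and program


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population stability index over pre-binned counts (same bins, same order)."""
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    total = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor each share at eps so empty bins do not cause log(0) or division by zero.
        e_share = max(expected / expected_total, eps)
        a_share = max(actual / actual_total, eps)
        total += (a_share - e_share) * math.log(a_share / e_share)
    return total


baseline_bins = [50, 30, 20]   # score distribution at model release
current_bins = [20, 30, 50]    # score distribution this week
drift = psi(baseline_bins, current_bins)
needs_review = drift > ALERT_THRESHOLD  # would trigger the escalation path
```

A check like this does not explain why the distribution moved; it only tells you when to trigger the escalation path and look more closely.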

Misconceptions that slow teams down

“We need perfect data before we can personalize.”

You need reliable data for a few high-value decisions, not perfect data everywhere. Start with one or two journeys (for example, enrollment completion and early adherence) and define the minimal signals required to improve those stages.

“AI personalization means we have to build everything ourselves.”

Most teams get better outcomes by combining configurable orchestration, strong integration patterns, and focused AI decisioning rather than custom-building a full stack. The key is to ensure your platform choices support auditability, consent-aware identity, and workflow integration for ops teams.

“Personalization is only a patient thing.”

In many brands, HCP engagement and patient engagement are intertwined, but they are not the same journey. Treat HCP orchestration, patient education/sign-ups, and support program outreach as connected but governed streams with clear audience separation and appropriate content controls.

A practical blueprint: how to implement AI personalization without creating compliance churn

If you want personalization that survives beyond one campaign, build it as a repeatable operating model. The blueprint below is designed for brand teams and omnichannel leads who need both performance and control.

A concise, repeatable blueprint helps brand teams implement AI personalization while minimizing compliance churn.

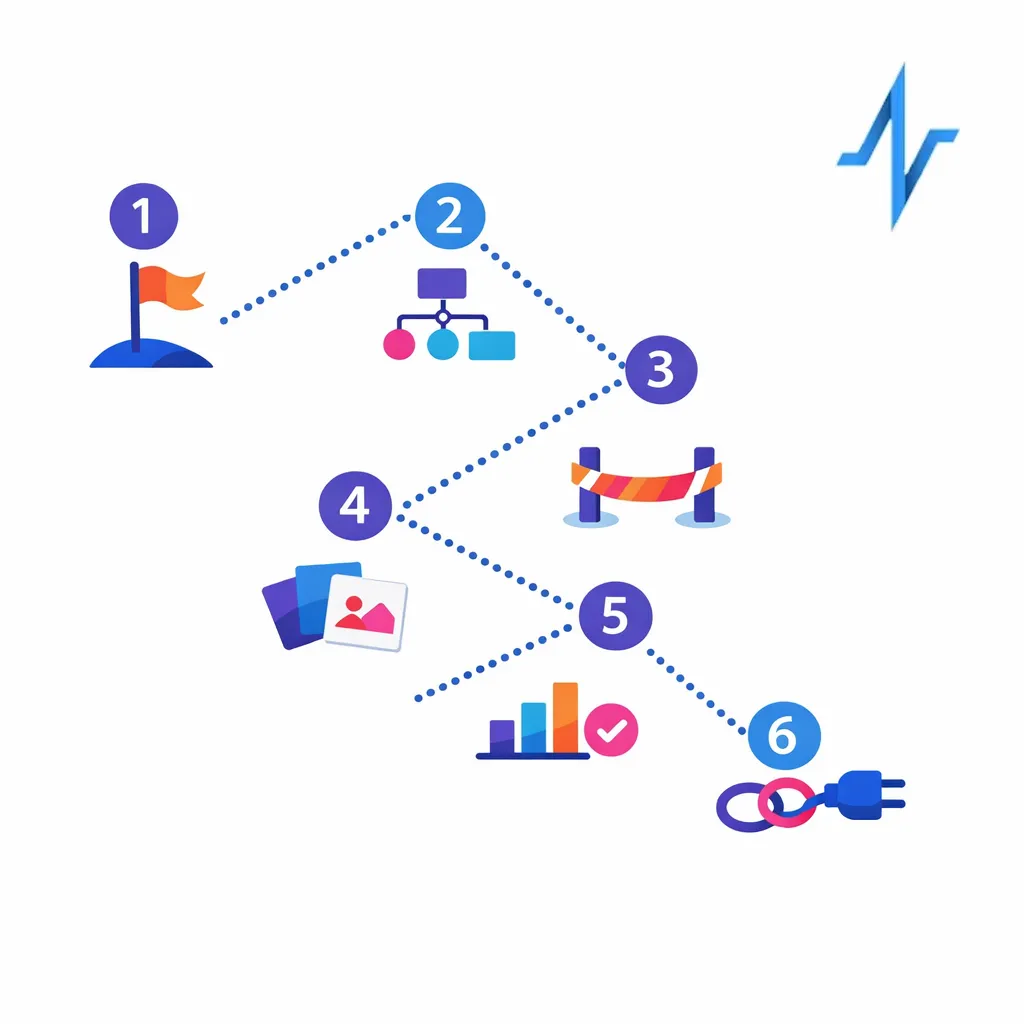

Step 1: Pick one journey and define “lift” in operational terms

Choose a journey where personalization can remove friction, such as sign-up completion, education progression, or adherence support. Define lift in concrete terms: reduced time-to-enrollment, higher completion rates for key steps, fewer redundant touches, or improved agent productivity.

Step 2: Map data sources to decisions (not to dashboards)

Map each decision to the minimal data required and document where inputs come from and what permissions apply.

List the decisions you want to improve (for example: “send reminder vs nurse outreach,” “offer affordability pathway,” “pause outreach after support interaction”). Then map the minimum data required to make each decision safely and consistently.

Document where each input comes from and what permissions apply. Where HIPAA applies, align the use with the HIPAA Privacy Rule and ensure security controls meet the HIPAA Security Rule.
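The decision-to-data mapping can be made explicit in code so that each decision only ever sees the fields it is documented to need. The decision names, field names, and sample profile below are hypothetical.

```python
# Hypothetical map from each decision to the minimum inputs it may read.
DECISION_INPUTS = {
    "reminder_vs_nurse_outreach": {"journey_stage", "days_since_last_step", "sms_consent"},
    "offer_affordability_pathway": {"journey_stage", "affordability_interest"},
    "pause_after_support_interaction": {"last_support_contact_at"},
}


def minimal_view(profile, decision):
    """Return only the fields documented for this decision; fail if any are missing."""
    required = DECISION_INPUTS[decision]
    missing = required - set(profile)
    if missing:
        raise KeyError(f"missing inputs for {decision}: {sorted(missing)}")
    return {name: profile[name] for name in required}


profile = {
    "journey_stage": "enrollment",
    "days_since_last_step": 6,
    "sms_consent": True,
    "zip_code": "00000",          # present upstream, not needed for this decision
    "diagnosis_detail": "[n/a]",  # present upstream, not needed for this decision
}
view = minimal_view(profile, "reminder_vs_nurse_outreach")
```

Keeping the map in one reviewable place also gives privacy and compliance reviewers a single artifact to approve when a new input is proposed.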

Step 3: Create a modular content library with metadata

Build modular assets with consistent metadata so AI can assemble approved modules across channels and stages.

Personalization needs content that can be assembled reliably. Break content into modules that can be reused across channels and stages, and tag modules with the metadata your decisioning engine needs (audience, stage, channel constraints, language variants).

For constrained channels, design templates that can consistently support appropriate disclosure. Keep the FDA guidance on character-space-limited platforms in mind when planning experiences that must balance benefit and risk communication.

Step 4: Implement orchestration with guardrails and decision logs

Configure frequency caps, channel permissions, suppression rules, and decision logs to build operational trust.

Operational trust is built when stakeholders can see and control what the system is doing. Configure frequency caps, channel permissions, suppression rules, and escalation triggers, and log every decision so it can be audited and improved.

Make “why this happened” visible to ops teams, not just data scientists.

Step 5: Build measurement that can prove causality

Use holdouts and controlled experiments to measure true lift. Track outcomes by stage, plus operational metrics like agent load, time-to-resolution, and content review cycle time.
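Once a holdout exists, lift on a stage outcome can be estimated as a difference in completion rates. The sketch below uses a normal-approximation (Wald) interval, which is a simple choice that assumes reasonably large groups; the counts are made up for illustration.

```python
import math


def lift_with_interval(treated_n, treated_success, holdout_n, holdout_success, z=1.96):
    """Absolute lift (treated rate minus holdout rate) with a Wald interval.

    z=1.96 corresponds to an approximate 95% interval.
    """
    p_treated = treated_success / treated_n
    p_holdout = holdout_success / holdout_n
    diff = p_treated - p_holdout
    std_err = math.sqrt(
        p_treated * (1 - p_treated) / treated_n
        + p_holdout * (1 - p_holdout) / holdout_n
    )
    return diff, (diff - z * std_err, diff + z * std_err)


# Made-up counts: enrollment completion in personalized vs holdout groups.
lift, (low, high) = lift_with_interval(
    treated_n=9000, treated_success=4050,
    holdout_n=1000, holdout_success=400,
)
lift_is_clear = low > 0  # interval excludes zero
```

If the interval includes zero, treat the result as "more activity, unproven impact" and keep testing rather than scaling the journey.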

Ensure your measurement design does not introduce ungoverned tracking. Revisit the principles in the OCR tracking technologies guidance when evaluating analytics tools and configurations.

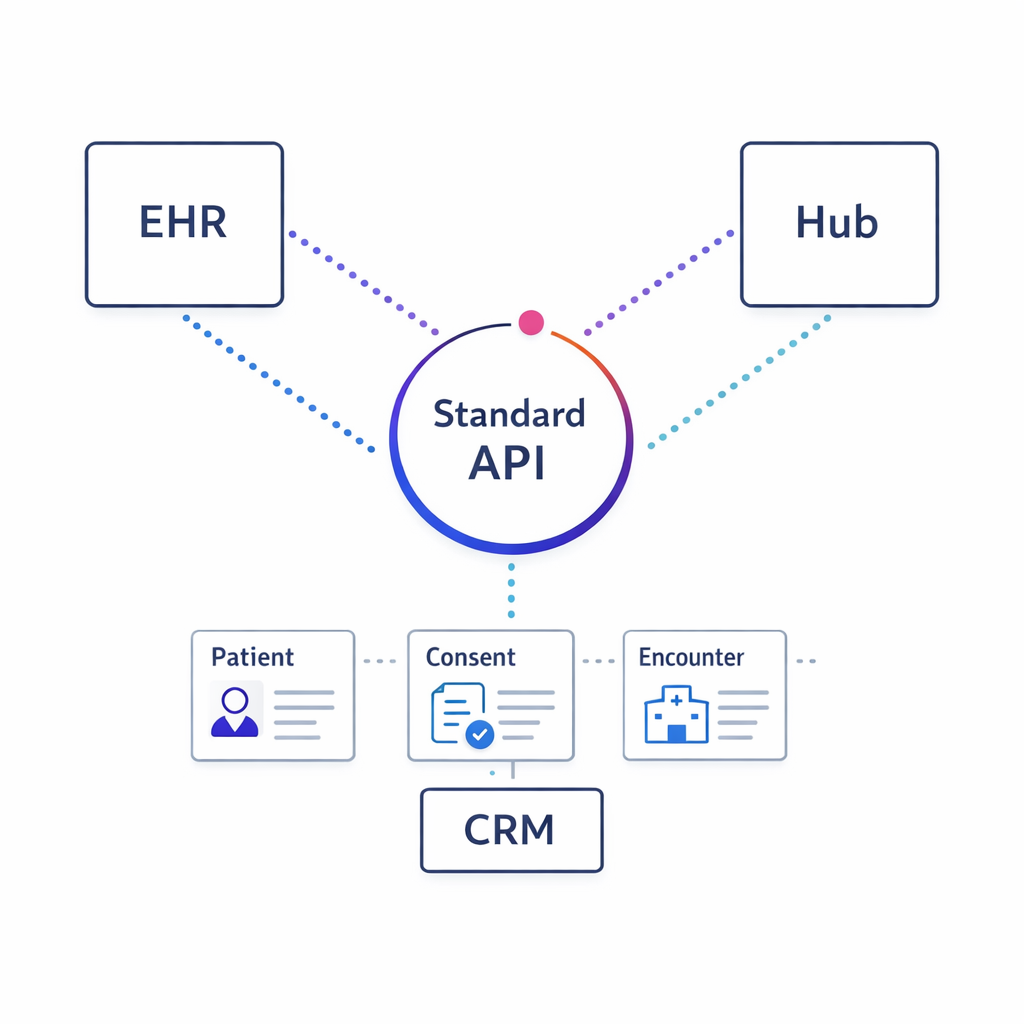

Step 6: Plan integrations for real-world workflows

Plan integrations with CRM, hubs, and contact-center tools; where clinical exchange is relevant, use standards like FHIR to simplify interoperability.

Personalization becomes sustainable when it integrates with CRM, hub services, contact center tools, and data environments. Where clinical or care-adjacent data exchange is relevant, standards such as HL7 FHIR can simplify interoperability patterns and reduce one-off integration work.

Even if you are not integrating directly with an EHR, adopting consistent data models and interface contracts will reduce long-term maintenance.

What to do next: a checklist for brand and omnichannel teams

- Choose one patient journey to personalize first and define 2–3 outcome metrics that matter (not just engagement).

- Inventory data flows for identity, orchestration, and measurement, and document what goes to which vendors.

- Confirm consent and permissions for each channel and each use of patient signals, and apply data minimization.

- Design MLR-friendly modular content so AI can select and sequence approved pieces instead of generating new claims.

- Implement guardrails such as frequency caps, channel suppression rules, and escalation triggers for nurse outreach.

- Require decision logging so every outreach can be explained and audited.

- Set up testing with holdouts to measure causality and avoid confusing activity with impact.

- Establish AI governance (model versioning, monitoring, and escalation) aligned to the NIST AI RMF.

- Review tracking and analytics configurations using the OCR tracking technologies guidance as a practical checklist.

Request a Demo: see compliant AI personalization in practice with Pulse Health

If your team is trying to scale patient journey personalization without multiplying journeys, vendors, and review cycles, it helps to see how orchestration, identity, and measurement fit together in one operating model. Talk to Pulse Health to walk through a real journey and discuss where AI can drive measurable lift while staying compliance-aware.

If you are evaluating options, bring one target journey and your current channel mix. You can also ask about integrations, how the platform works, or a platform overview so commercial ops and CRM owners can assess fit alongside brand stakeholders.